Don’t you just hate it when you cannot find the content you are looking for? You know that you have relevant content, but either you can’t remember where it is, or you know where it is, but there is so much content that it will take ages to look at every file to find what you are looking for.

For years, organisations have had to make do with semi-functioning image search through the “manually tag every image first” process: an incredibly laborious, time-consuming and, let’s face it, more often than not inaccurate exercise.

This blog post provides an insight into the exciting new cognitive image recognition and image search solutions that we are building and how our customers are using them.

We Live in a Visual World

While it appears that image recognition, or computer vision, is a new concept, it has in fact been around since the late sixties, when universities started pioneering artificial intelligence by attaching cameras to computers and having them describe what they saw (Wikipedia).

The difference today is that it is a lot more prevalent. We live in a world where people have whole conversations using emoji icons, take pictures of what they had for lunch and post videos of themselves at music concerts. The world has become a lot more visual, partly because it’s fun and interesting and partly because people are constantly on the go, so they don’t have the time to describe everything in minute detail in a text message. The big drivers, though, are technology and social media.

Cloud-based AI Has Made Image Recognition Practical

Take Facebook, for example. They have introduced functionality that enables the blind to “see” what’s going on in a picture and explain it out loud. Pinterest now allows users to search for products with pictures instead of words, all without having to visit their website. Google’s Search by Image offers a similar experience. It lets users begin a Google search with an image. Even Apple has got in on the act with the new iPhone X by providing facial recognition.

It is, however, not just about taking pictures of cute puppies running around on green grass with red balls in their mouths and then sharing them with friends or family.

It is now more than that. For example, not only does Amazon’s image recognition service (Amazon Rekognition) identify that the image is of a dog with a ball, but it can also decipher which breed of dog it is. Microsoft provides the ability to search for words in images in OneDrive and SharePoint in Office 365. These technologies provide powerful visual search and image classification based on deep learning.

Why is Getting the Breed of Dog from a Picture Important?

Well, it may not be to everyone. However, if you are a vet or work with animals, you may not know every dog breed in the world, yet you may need this information to do your job. To highlight the complexity, at the last count (according to the Fédération Cynologique Internationale (FCI)), there were 332 different breeds. The point is that very few humans can identify every breed of dog, whereas it is likely that a computer using image recognition can.

The vast amount of computer power, huge data warehouses and advancements in technologies such as deep learning (a subfield of machine learning) and Artificial Intelligence mean that image recognition is more useful, attainable, affordable and applicable. Therefore, it is having a significant impact in the working world.

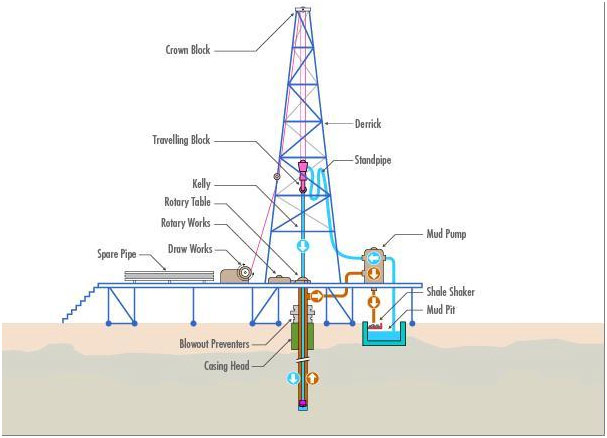

Another Example: Maintaining Oil Rigs

Here we have a picture relating to oil drilling which is handily labelled.

An oil worker may need to replace a specific part from the drill or rig. Image recognition can help identify the part that needs replacing.

Searching for images used to be about searching for keyword tags (metadata) that had been manually attached to each image by subject matter experts. Ultimately, this is a time-consuming, subjective and potentially error-prone exercise.

When users conduct a search they are, hopefully, presented with a set of images. They can look through each picture to find what they are looking for. Even then, they may not get the right image and the image may not provide the information that they are seeking.

AI training technologies such as Amazon Rekognition, Microsoft Cognitive Services and Google’s Cloud Vision Machine Learning Services, to name just a few, have databases containing billions of images tagged with keywords about what is inside pictures. (For a comparison of Google and Microsoft’s AI offerings, see BA Insight’s CTO – Jeff Fried’s presentation, “The Race is on: comparing Google and Microsoft’s Cognitive Services”). They use image detection algorithms to analyse and classify images into categories, without the need for human intervention. They detect objects, scenes, faces, etc. and even identify inappropriate content in images.

So, in the case of oil workers, they can simply conduct a search for the part that they are looking for and be presented with the applicable image. They could even upload a picture of the broken part, which in turn would return the correct part along with the part number, the locations that stock it and how to order it. This makes the entire process a lot faster and simpler and, most importantly, ensures that the correct replacement part is ordered.

The technologies can also help organisations with compliance and organisational standards, e.g. content moderation. It is even possible to interpret emotions and sentiment in an image. At the last count, 34.7 billion images had been shared on Instagram (statisticbrain.com), meaning that companies like Google, Pinterest and Facebook are more than happy for users to freely upload pictures because they can use these images to train their deep learning networks to become more accurate.

Despite the billions of images that are freely available on the web and the prebuilt AI training technologies mentioned above, there is often a requirement for organisations to create their own algorithms, build their own Machine Learning solutions and/or train models themselves. This is all possible with solutions like TensorFlow and Microsoft Custom Vision Service. The ability to customise solutions using these tools means that companies can apply organisational terms, labels and acronyms to company images and documents that contain images. Slaven Bilac’s blog “How to Classify Images with TensorFlow using Google Cloud Machine Learning and Cloud Dataflow” provides a brilliant technical insight into how you can build your own vision models using TensorFlow.

BA Insight’s Solution

Image recognition is not only useful for standalone images. Nowadays, images are also contained within documents. For example, using functionality such as Optical Character Recognition (OCR), it is possible to detect and then extract text within images. The oil drilling image above is a perfect example of this. Let’s say that this image was held within a PDF document called “Maintaining Oil Rigs.pdf”. Using a traditional search, when a user searches for the terms “replace mud pump”, the search would not return the document “Maintaining Oil Rigs.pdf”. The reason is that traditional search does not index text held within images. Using image recognition, the text stored within the image can be extracted and used as part of the search process. Using the BA Insight SmartHub and BA Insight AutoClassifier features, we take this process even further.
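To make the “replace mud pump” scenario concrete, here is a minimal sketch in Python of why indexing text extracted from images changes the search result. It is purely illustrative and is not how SmartHub or AutoClassifier is implemented. The OCR step is assumed to have already run: the `image_text` values are made-up placeholders for its output, and the second document, the body text and the function names are all hypothetical.

```python
import re
from collections import defaultdict


def tokenize(text):
    """Lower-case the text and split it into alphanumeric terms."""
    return re.findall(r"[a-z0-9]+", text.lower())


def build_index(documents, include_image_text):
    """Map each term to the set of document names that contain it."""
    index = defaultdict(set)
    for name, doc in documents.items():
        text = doc["body"]
        if include_image_text:
            # Text that an OCR step is assumed to have extracted from images.
            text += " " + " ".join(doc["image_text"])
        for term in tokenize(text):
            index[term].add(name)
    return index


def search(index, query):
    """Return the documents that contain every term in the query."""
    terms = tokenize(query)
    if not terms:
        return set()
    return set.intersection(*(index.get(term, set()) for term in terms))


# Hypothetical documents; image_text stands in for real OCR output.
documents = {
    "Maintaining Oil Rigs.pdf": {
        "body": "Maintenance schedule for the drilling rig.",
        "image_text": ["Replace mud pump", "Drill pipe", "Derrick"],
    },
    "Site Safety.pdf": {
        "body": "Safety checklist for visitors.",
        "image_text": [],
    },
}

text_only = build_index(documents, include_image_text=False)
with_ocr = build_index(documents, include_image_text=True)

print(search(text_only, "replace mud pump"))  # no match without image text
print(search(with_ocr, "replace mud pump"))   # the PDF is now found
```

The only difference between the two indexes is whether the image text is included, which is exactly the gap between a traditional search and one that uses image recognition.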

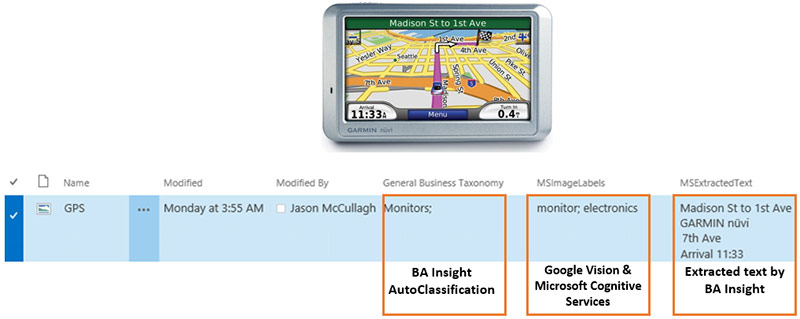

We also take the label output from solutions such as Google Cloud Vision and Microsoft Cognitive Services, along with open source technologies like TensorFlow, and classify it further based on a set of business rules to provide a high-quality, well-defined set of metadata tags.
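As a rough illustration of that idea, the Python sketch below maps generic labels onto organisational metadata using a small rule table. Everything in it is an assumption made for the example: the labels and confidence scores are invented rather than real output from any vision service, and the rule table, threshold and `classify` function are hypothetical, not AutoClassifier’s actual rule format.

```python
# Invented (label, confidence) pairs standing in for a vision service's output.
raw_labels = [("Pump", 0.93), ("Machine", 0.88), ("Rust", 0.71), ("Sky", 0.42)]

# Hypothetical business rules: generic label -> (metadata field, company term).
RULES = {
    "pump": ("Equipment", "Pump"),
    "machine": ("Equipment", "Machinery"),
    "rust": ("Condition", "Corrosion"),
}

# Assumed cut-off below which a label is treated as too unreliable to use.
MIN_CONFIDENCE = 0.6


def classify(labels, rules=RULES, min_confidence=MIN_CONFIDENCE):
    """Turn raw labels into metadata tags grouped by field."""
    tags = {}
    for label, confidence in labels:
        if confidence < min_confidence:
            continue  # drop low-confidence labels
        rule = rules.get(label.lower())
        if rule is None:
            continue  # drop labels the organisation has no rule for
        field, value = rule
        values = tags.setdefault(field, [])
        if value not in values:
            values.append(value)
    return tags


print(classify(raw_labels))
```

Keeping the rules in a plain lookup table means subject matter experts can adjust the vocabulary without retraining a model, which is one reason a rule layer on top of generic labels can be attractive.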

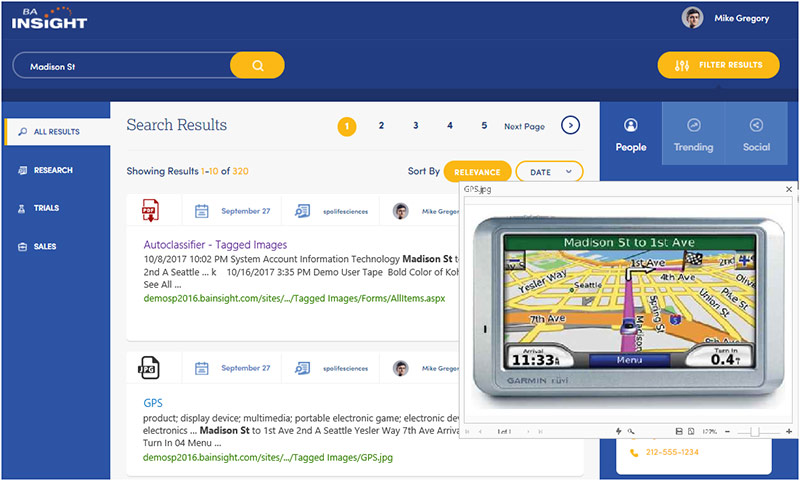

So, in the satellite navigation example, we extract the text from the image and assign it to the image as metadata. This means that when a user searches for the words “Madison St”, the image is returned in the search results.

Naturally, and as we all know, better metadata has a dramatic impact on search relevancy. To find out more, take a look at Jeff’s blog – Adding Machine Learning – Introducing our New Release of AutoClassifier.

BA Insight is in the Real World

At BA Insight, we take a best-of-breed strategy to deliver the best solution. Take for example one of our oil and gas customers. They have inspectors/maintenance folks that go to every plant (and rig) with mobile tablets. They take notes and snap dozens of pictures per site and upload them into a SharePoint site.

The problem is that people don’t have the time to manually tag all the content that they need to refer to, let alone look for patterns across thousands of sites. This creates the age-old problem of “I cannot find what I am looking for” and often causes a breakdown in process, which can be detrimental to the running of the rig or plant.

Through automated and contextual tagging, the BA Insight AutoClassifier provides the ability to define what each photo is about and even to recognise and highlight issues, e.g. by training a model to recognise tell-tale signs of equipment and machinery wear and tear. This process is run across all content, eliminating the need for manual image classification and ultimately enabling users to find what they are looking for.

Better Search with Images

As humans, we visually interpret things naturally whereas we need to train computers to do this. It is highly unlikely that a computer will ever perform this as well as we can. This said, understanding and interpreting images in context means the transformation of visual images into descriptions of the world. This is subjective as people see things differently and in some cases e.g. my breed of dog example, humans may not be able to understand the image at all. This is the computer’s differentiator. Combining this with BA Insight’s tools, which can interface with other thought processes and elicit appropriate actions, means you have a powerful solution.

Ultimately, images are not replacing words; they are simply another way to capture content, hence the saying that a picture is worth a thousand words. Technology such as image recognition and machine learning means that our search experiences are being greatly enhanced, and with new technology being developed all the time, our AI experiences will continue to grow.

There are many more exciting things we will be doing with cognitive services. We are always interested in new ideas, applications and difficult challenges, so if you have questions or suggestions around cognitive search and machine learning, don’t hesitate to contact us.